Upgrade to iOS 11 this September, and your device will learn all about you to ease your day – but keep what it learns to itself

‘Machine learning’. ‘Deep learning’. ‘Computer vision’. These phrases came up so often during Apple’s WWDC 2017 keynote that if they were part of a drinking game, you’d have ended up under the table before the first hour was done.

But these terms aren’t empty buzzwords – they sit at the heart of where Apple sees the future of the iPhone, and of the wider ecosystem of devices and services around it. In short, they’re all about your device doing more work for you, invisibly, and without you even realising you wanted the work done in the first place.

Creature of habit

This future is all about context and habits. The idea is that rather than you having to manually perform tasks, your iPhone will automate specific actions based on what it learns about you. The more you use your iPhone, the more useful it will become – and those changes can happen surprisingly quickly, often helping with whatever you decide to do next.

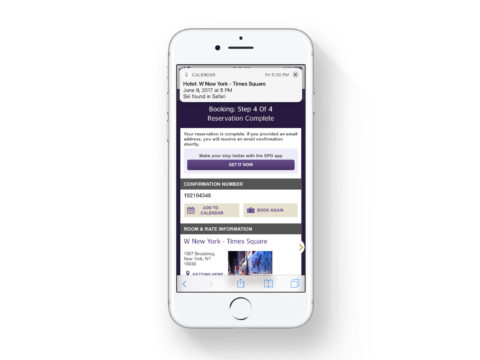

For example, spend time in Safari looking at a holiday destination, and Siri will spot your interest. Next time you fire up News, it might recommend stories about that location. Your iPhone also notes the vocabulary of the things you’re reading, so when you switch to Messages, autocomplete will prioritise words relating to your recent reading matter. Book the holiday in Safari and your iPhone will offer to add it to your calendar.

Perfect memories

This personalisation extends to cherished memories. Photos in iOS already creates albums automatically in the Memories tab, but its abilities are mostly limited to locations and times. Apple says that in iOS 11, machine learning will let Photos scan your library in a much smarter manner, picking out individual events.

Instead of ‘best of last month’, then, you should soon be able to quickly get at automatically created albums based on things like sporting events and weddings. Through ‘computer vision’, your iPhone will better identify not only scenes but also family members, from children to pets. Similar technology should also greatly improve the fun you can have with Live Photos, creating seamless loops that can play forever.

Deeper learning

Whatever device you’re using, Apple intends for these smarter behaviours to improve your user experience. If you’re driving, the iPhone will now figure this out, and at the end of the journey offer to turn on ‘do not disturb while driving’ for future trips. (This feature blacks out the screen, but can be ‘broken through’ if someone includes a user-defined keyword, such as ‘urgent’, in a message.)

In Apple Watch’s upcoming watchOS revamp, Siri again makes an appearance, most notably in a new watch face that places the information you need front and centre at all times. Through machine learning based on your routine, it will display appointments, commute times, reminders, sunset times, and HomeKit controls at the most opportune moments. The aim is to keep what you see relevant, cutting down on noise, and ensuring your devices save you time rather than robbing you of it.

Keep it private

The trade-off for such convenience is usually privacy – or rather the lack of it. Companies tend to use what they learn about you in order to sell you things, or make money through the sale of bulk semi-anonymised data. Apple has no truck with that. It wants to sell you more hardware, and reasons that one way to do that is to respect your privacy.

This is why, although your iPhone will soon learn more about you, it won’t be sharing what it discovers with other people and organisations. What Siri learns is protected by end-to-end encryption, readable only by you and your devices.

Perhaps that’s the best aspect of these new features – after all, if your iPhone’s about to transform into a capable, intelligent, genuinely helpful, tiny robot butler, that’s only a good thing if it then doesn’t spend its downtime blabbing about you to all and sundry.