Let’s talk about the Cambridge Analytica data harvesting controversy. That is, the shocking revelation that Facebook was complicit in allowing millions of users’ personal data to be sold to a modern-day propaganda machine.

Data harvesting

For those unsure of how this happened, here’s a quick refresher: in 2014, a quiz app called “This Is Your Digital Life” scraped the public profile data of everyone who logged into the app via Facebook. Thanks to the way Facebook’s systems worked at the time, the app was also able to scrape information about each user’s Facebook friends. (Thankfully, this kind of data extraction is no longer possible.)

Up to 87 million user profiles were later sold to Cambridge Analytica, a political consulting firm that uses shady data mining techniques to inform advertising and election campaign promotions. Although Facebook never intended user profiles to be harvested in this manner, it certainly didn’t do enough to prevent it from happening, and it didn’t inform users after the breach was discovered. It took a further four years for the news to go public – hence the whirlwind of stories over the past few weeks, and Facebook CEO Mark Zuckerberg’s recent testimony in Congress.

But how exactly does it all affect you, and can you trust Facebook anymore? If nothing else, this story is a warning – an eye-opening indication that we need to keep better track of our online privacy.

Targeted ads

Facebook uses a network of website tracking techniques and old-school data harvesting from third parties to learn things like your income, your political leanings, where you work, whether you’re a homeowner, what kind of clothes you buy, and more. Add this to the information you voluntarily upload, like photos and relationship statuses, and you can see that Facebook has a pretty good picture of each of its users. Ultimately, most of this data is used to target ads.

Facebook is a free product because it monetizes its users, in much the same way Google has done for years. That doesn’t make Facebook or Google intrinsically bad – there’s a certain nobility in trying to make a service everyone can afford – but it does mean those companies need to work harder to keep our trust, and should be held accountable when they make mistakes. And Facebook has made some pretty big mistakes.

It’s particularly interesting to compare this to Apple’s privacy strategy. Apple’s business model runs almost exclusively on premium hardware sales. It doesn’t depend on building user profiles or selling targeted ads. It’s just not interested in your personal data – in fact, Apple’s dedication to privacy and security is one of the best aspects of iPhone ownership. It’s a huge advantage over competing smartphones, and one that doesn’t always get much airtime. (That said, Apple’s refusal to mine every possible vein of user interaction is also the reason Siri is lagging behind Alexa and Google Assistant in the smarts department.)

Public shaming

It may be tempting to delete Facebook as an act of protest, but it’s unlikely to make much difference to the company’s bottom line – and it won’t get back any data that has already been scraped. Not to mention the fact that behind all this bad press is a service that’s genuinely very useful for a vast number of people. Facebook also owns Instagram and WhatsApp – do you really want to give up all three of those apps entirely?

A far better outcome would be for Facebook to learn from its public shaming and make significant changes to how it operates going forward. It’s promising to do better, and we should give it time to deliver. This whole saga has been a huge PR nightmare for Zuckerberg’s team, and the last thing they want is to be caught out again. Putting Facebook under the spotlight will hopefully force the firm to get its act together and finally start protecting our data properly.

This is already starting to happen, with Facebook locking down the access granted to other apps through its platform. It has also promised to start filtering untrusted political ads, labeling them to let users know who paid for each ad. With the US midterms approaching, this kind of transparency is increasingly important to avoid the spread of misinformation.

Making changes

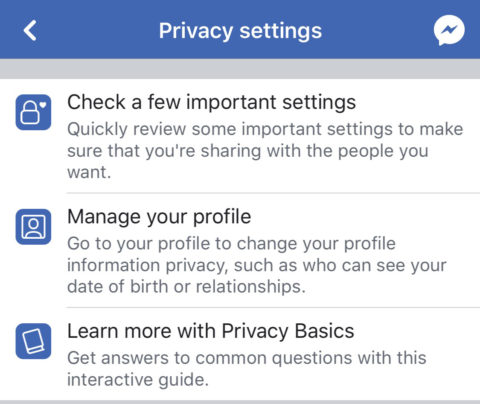

As for what you can do personally? Pay more attention to your privacy settings, and to the amount of control you cede to unknown companies. Limit the information you make public on your account. (Open Facebook and go to Settings > Account Settings > Privacy to make changes.)

Now is also a good time to reconsider any apps you’ve linked to your account, and delete those you no longer trust. Apps that allow you to log in through Facebook gain access to your public profile, so it’s worth being careful about who you allow access to. Unless you’re signing into an app you trust fully, it’s better to avoid linking it with your Facebook account. After all, that’s exactly how Cambridge Analytica ended up absconding with 87 million user profiles.

If you want to go further, you can even download an archive of every scrap of data Facebook has on you. It’s a bit creepy to see everything you’ve ever posted (and more) laid out in one huge document, but it’s easy to do and certainly makes for an interesting read. (From Facebook, press Settings and then Download a copy of your Facebook Data.) That way, even if you decide to delete the social network for good, you’ll still have access to all those embarrassing statuses you wrote ten years ago.